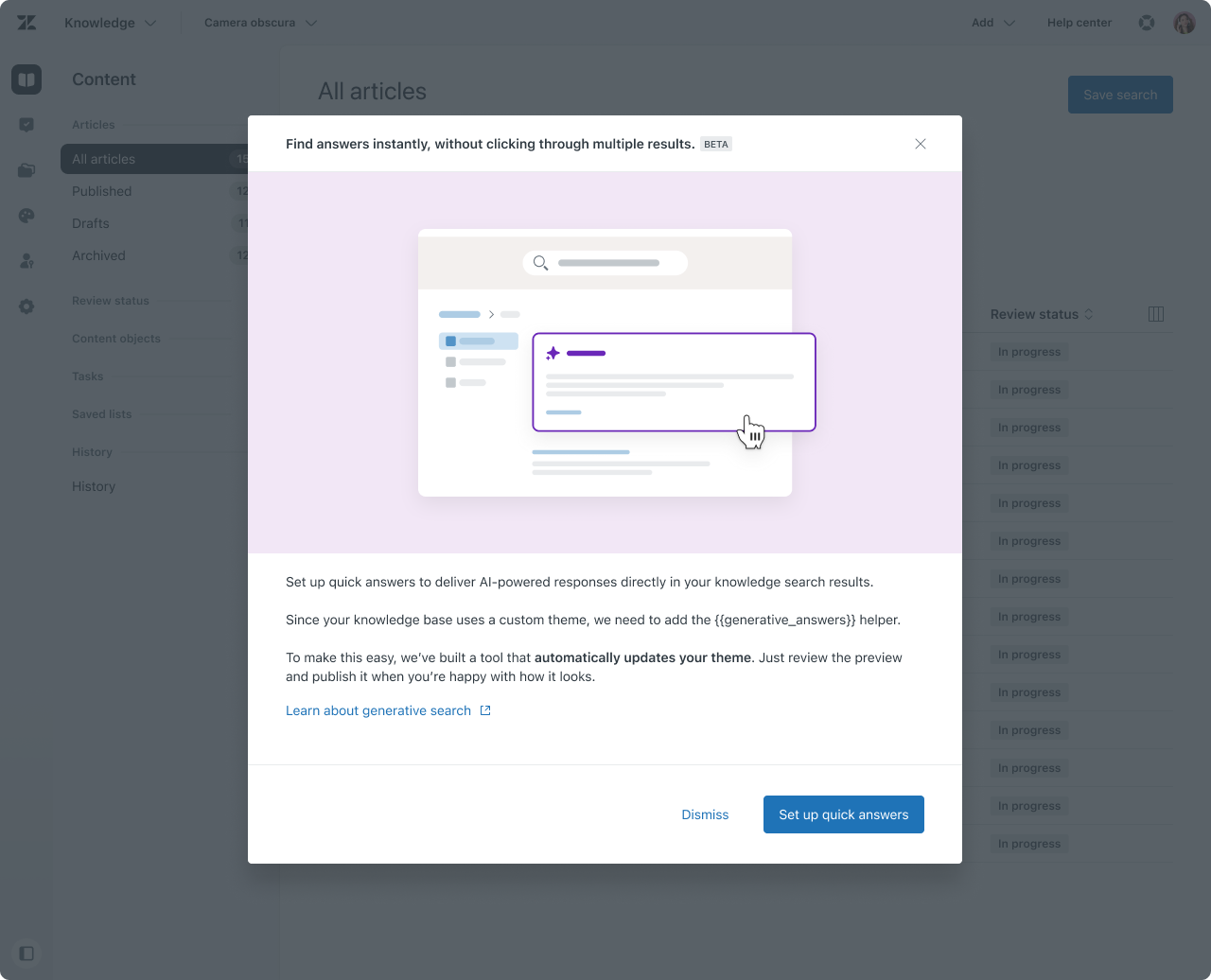

As Zendesk expanded its investment in Generative Search, adoption among customers with custom Help Center themes lagged behind. The core blocker was not feature value, but theme maintenance risk.

This project focused on designing and validating a safe, scalable way for customers to update custom themes, enabling faster adoption of AI features without requiring manual code changes or developer support.

Generative Search relies on a specific helper being present in the Help Center theme. While Zendesk’s standard themes had already been updated, the majority of customers relied on custom themes that could not be automatically modified without risk.

At the time, roughly 22,000 accounts were eligible for Generative Search, and about 18,000 of them (roughly 82%) used custom themes. Manual updates required developer time and carried production risk, which significantly slowed adoption.

Customers who wanted to enable Generative Search faced a difficult decision. Updating their theme manually meant touching production code they often didn’t fully understand, with no clear way to preview changes or recover if something went wrong.

For Zendesk, this created a gap between the potential of Generative Search and its real-world adoption. Solving this problem required more than clearer documentation; it required a fundamentally safer update model.

To move quickly and align cross-functionally, I planned and facilitated a one-week Google Design Sprint with Product, Engineering, and Design.

Sprint structure:

• Day 1: Problem framing, constraints, and technical walkthroughs

• Day 2: Customer journey mapping and “How Might We” exercises

• Day 3: Solution sketching and concept selection

• Day 4: Prototype in Figma (Lovable-assisted)

• Day 5: Internal critique and prep for customer validation

The sprint helped us map the current experience, surface technical constraints of Theme Center, and explore different ways of delivering updates without breaking customizations. Through sketching and critique, we tested ideas ranging from full auto-updates to step-by-step guidance.

By the end of the sprint, a clear pattern emerged: customers weren’t resistant to updates themselves; they were resistant to irreversible change.

Instead of treating theme updates as a replacement, we reframed them as a controlled migration.

The resulting concept, the Theme Updater, creates a copy of the customer’s existing theme and applies the necessary updates to that copy. Customers can then preview the updated theme, understand what changed, and decide when (or if) to publish it.

This approach shifts the experience from “trust us” to “verify first,” giving customers confidence while keeping their original theme intact.
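
The copy-then-verify model described above can be sketched in a few lines. This is only an illustration of the idea, not the Theme Updater's actual implementation: the `Theme` type, the helper string `{{example_helper}}`, and the template path are all hypothetical placeholders, since the helper and the files it touches are not named here.

```python
from dataclasses import dataclass, replace
from typing import Dict, List

# Hypothetical placeholders: the real helper and template are not named in this case study.
HELPER = "{{example_helper}}"
TEMPLATE = "templates/search_results.hbs"


@dataclass(frozen=True)
class Theme:
    name: str
    files: Dict[str, str]
    published: bool = False


def create_updated_copy(original: Theme, helper: str = HELPER,
                        template: str = TEMPLATE) -> Theme:
    """Return a new, unpublished theme with the helper added.

    The original theme is never modified.
    """
    files = dict(original.files)  # copy the mapping so the original stays intact
    body = files.get(template, "")
    if helper not in body:  # skip themes that already contain the helper
        files[template] = helper + "\n" + body
    return Theme(name=original.name + " (updated copy)", files=files, published=False)


def changed_files(original: Theme, updated: Theme) -> List[str]:
    """List files whose content differs, so the admin can see what changed."""
    return sorted(
        path for path in updated.files
        if original.files.get(path) != updated.files[path]
    )


def publish(theme: Theme) -> Theme:
    """Publishing is a separate, explicit step taken only after preview."""
    return replace(theme, published=True)


live = Theme(
    "Acme Help Center",
    {TEMPLATE: "<h1>Results</h1>", "style.css": "body{}"},
    published=True,
)
draft = create_updated_copy(live)
```

In this sketch the live theme stays published and untouched, the draft carries the change, and `changed_files(live, draft)` gives the admin something concrete to review before calling `publish`. Rolling back amounts to discarding the draft.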

We validated the concept through interactive prototypes and customer walkthroughs, including A/B testing two post-update flows: returning users to Theme Center versus taking them directly into preview mode.

Feedback consistently showed that confidence increased when customers could see changes before publishing and when rollback was clearly communicated. These insights directly informed the final flow, including preview states, success notifications, and messaging around safety.

The Quick Answers Theme Updater entered beta in November 2025 and reached general beta availability in December. It currently supports around 60% of custom themes, with coverage expanding as more theme patterns are validated.

I led feedback collection for the Early Access Program (EAP) through:

• Customer interviews

• Surveys

• Qualitative follow-ups with admins across different industries and AI maturity levels

Key insights shaped the roadmap:

• Admins value specific topics surfaced from real tickets, not abstract metrics

• ROI is compelling, but customers want localized and customizable assumptions

• Knowledge and AI Agent ownership often sit with different people, requiring better collaboration workflows

• After activation, admins want to understand the impact of changes, not just predictions

These learnings directly informed post-adoption concepts such as refinement insights, escalation signals, and content impact visibility.

Designing for adoption is often about reducing perceived risk, not adding more capability. In this project, trust was built through reversibility, preview, and transparency, not automation alone.

Facilitating a design sprint early allowed us to align deeply across disciplines and uncover the real barrier behind slow adoption. It also helped turn a one-off feature request into a scalable pattern for future Help Center updates.

Most importantly, this work reinforced that AI features don’t fail because of intelligence; they fail when the surrounding system doesn’t make them safe to use.

© 2026 platzchen · All rights reserved
