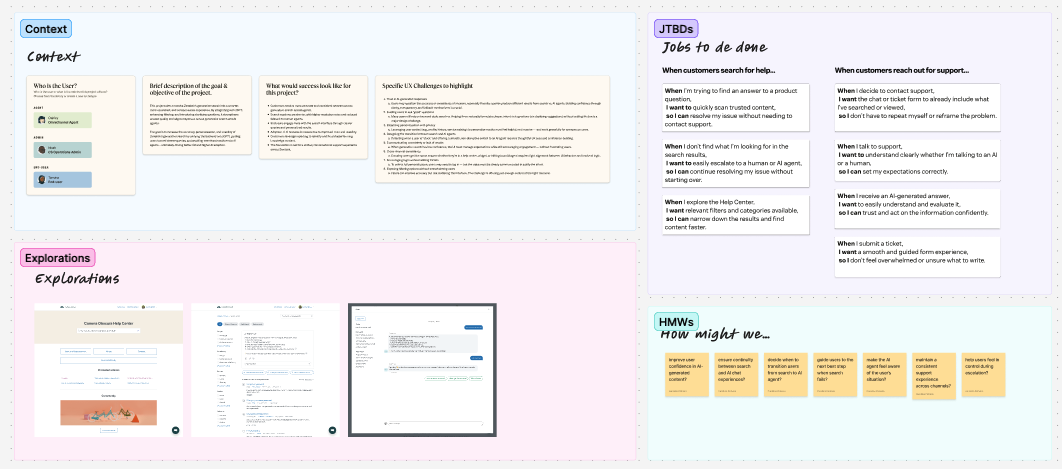

The Conversational Help Center reimagines self-service as a continuous, conversational experience, bringing traditional knowledge search, generative answers, and AI agents together in a single, cohesive flow.

The goal of this project was to reduce fragmentation across support touchpoints by allowing customers to start with search, receive meaningful AI-generated answers, and seamlessly continue the conversation, without losing context or being forced into channel switches. In parallel, the project introduces Suggested Questions, enabling admins to guide customers toward better entry points for self-service based on real search behavior.

This work sat at the intersection of Knowledge, AI Agents, Messaging, and Search. I led design across discovery, concept exploration, validation, and early delivery planning, shaping both the end-user experience and the admin tooling required to make it scalable, configurable, and trustworthy.

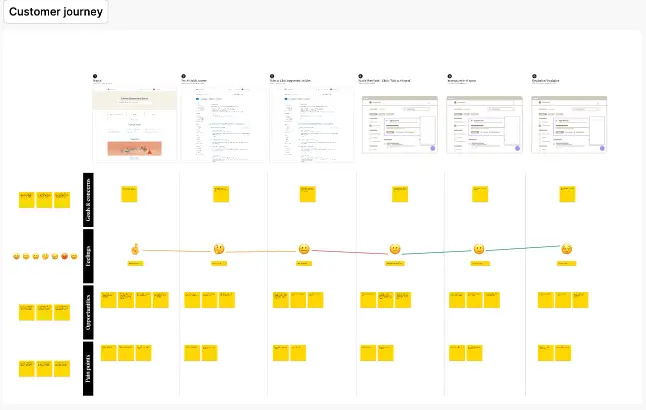

End users often start in a Help Center search, encounter static articles or partial answers, and then must decide whether to refine their query, switch to an AI agent, or escalate to another channel entirely. While these channels are unified on the agent side, the customer journey remains disjointed, with context frequently lost between steps.

At the same time, user expectations are shifting. Customers increasingly expect conversational, contextual support experiences similar to what they encounter in tools like ChatGPT or modern search engines. Traditional Help Centers struggle to meet these expectations, especially when queries are vague, poorly formed, or missing product context.

Admins face a parallel challenge: generative search and AI agents are powerful, but admins lack tools to shape how customers enter the experience, which leads to inconsistent results and lower self-service success.

The core problem wasn’t just discoverability; it was continuity, guidance, and confidence across the entire support journey.

A central design challenge was enabling a smooth transition from informational search to conversational support, without forcing users into an abrupt context switch.

Key decisions included:

• Treating Quick Answers as a bridge, not an endpoint

• Making the transition to AI agents feel like a continuation of the same interaction, with prior context preserved

• Exploring multiple patterns (full-page conversation, expanded widget, sidebar experiences) to balance scalability, immersion, and feasibility

The full-page conversational model emerged as the most future-proof, particularly for supporting multimodal interactions like voice, images, and rich sources, while still allowing pragmatic MVP entry points via widgets.

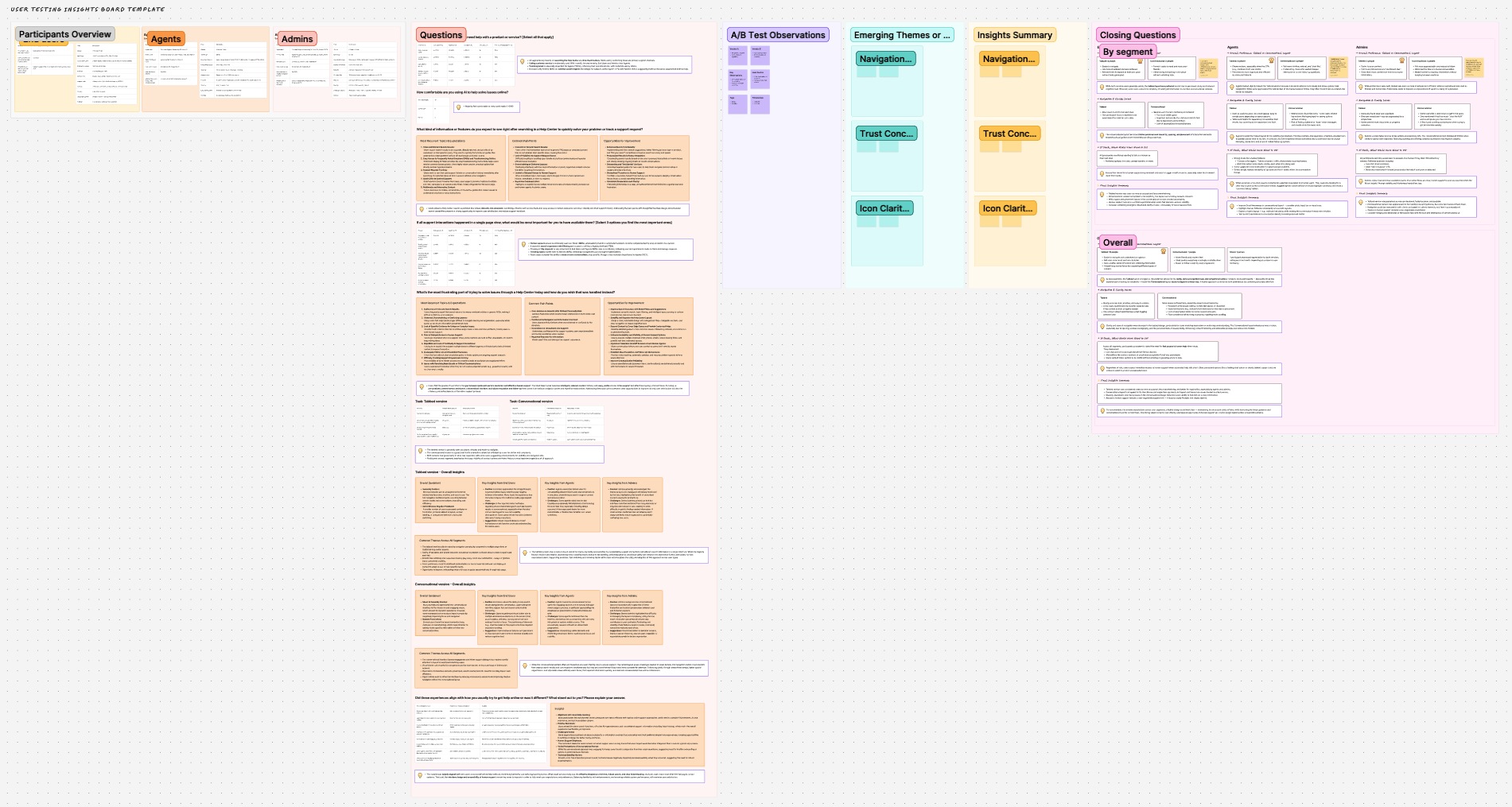

I led discovery and validation across research, workshops, and iterative testing, ensuring the experience was grounded in real user behavior while remaining feasible across teams.

This included unmoderated user testing with Support Operations and Knowledge admins to validate the Suggested Questions setup, language handling, selection flows, and overall clarity of the admin experience. In parallel, I tested core experience assumptions, such as widget-based versus full-page conversational patterns, to assess scalability, comprehension, and alignment with long-term goals like multimodal support.

I also facilitated cross-functional design workshops with designers and product partners to explore multiple interaction models, align on scope, and stress-test the vision against delivery constraints. These workshops helped narrow early explorations into clearer directions, align expectations across teams, and surface key assumptions that required validation before implementation.

Insights from testing and workshops directly informed layout, hierarchy, terminology, MVP scope, and sequencing, allowing us to move forward with confidence while continuing to refine the long-term conversational Help Center vision.

This project reinforced several core principles in my design practice:

• Conversational UX is a system design problem, not a single interface

• AI experiences require strong entry-point guidance, not just better answers

• Admin tooling must scale with complexity without transferring cognitive load to users

• Validation is most effective when it informs what to build now vs. what to envision next

• Designing for future capabilities (voice, multimodal) requires early architectural thinking, even in MVPs

The Conversational Help Center pushed me deeper into experience strategy, aligning long-term vision with near-term delivery, while keeping both customer and admin needs in balance.

© 2026 platzchen · All rights reserved
