Get In Touch

Phone

Email

Address

Follow us

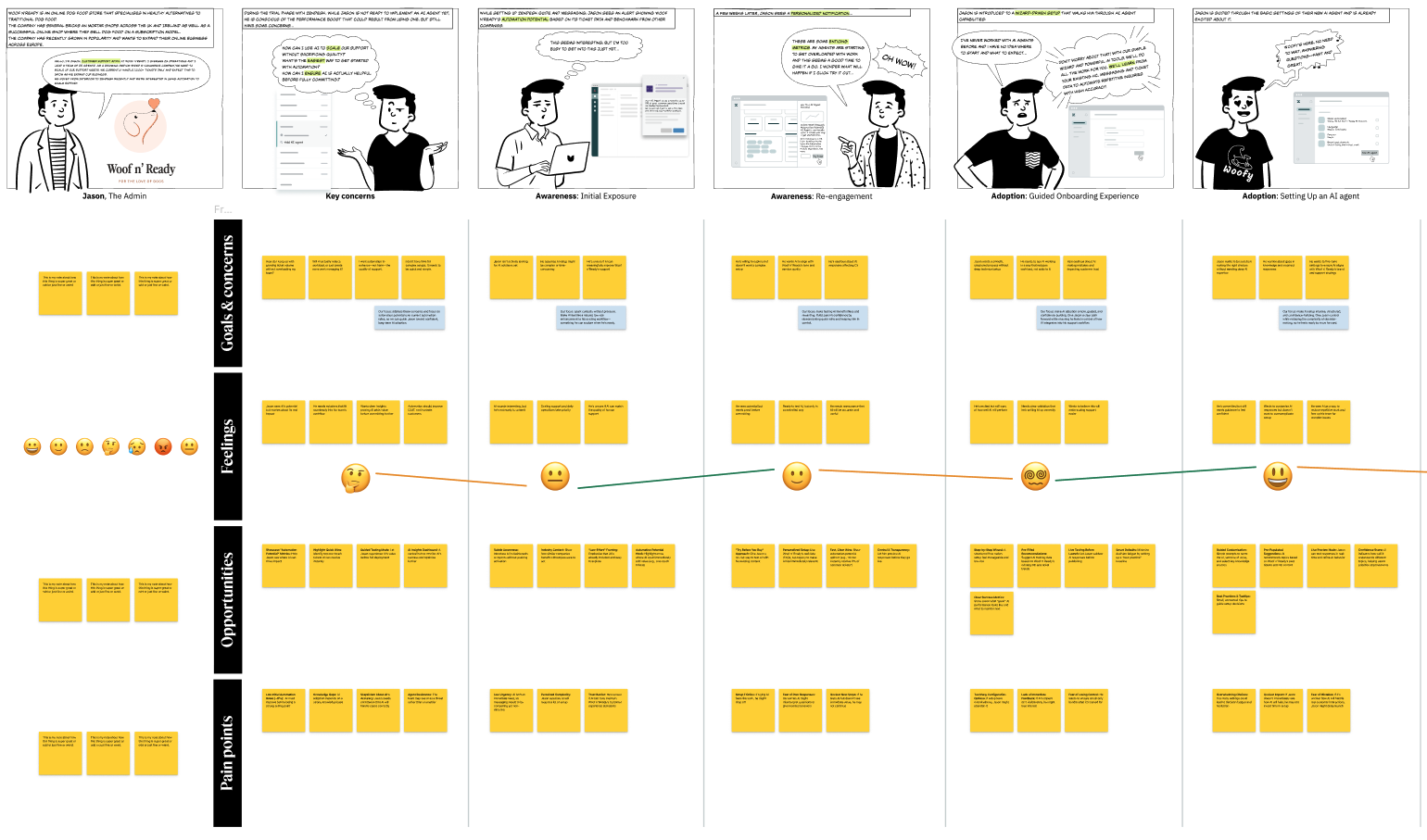

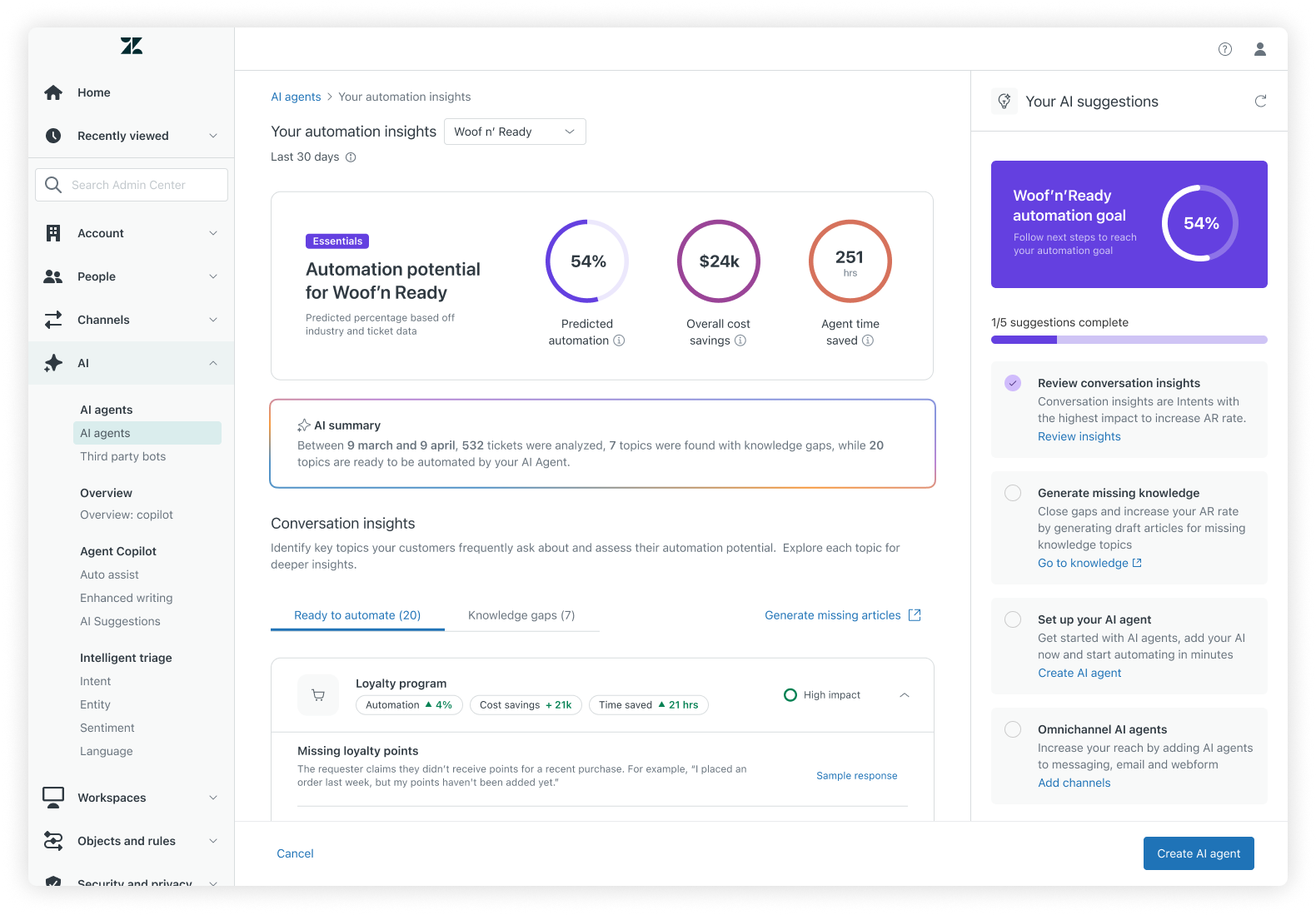

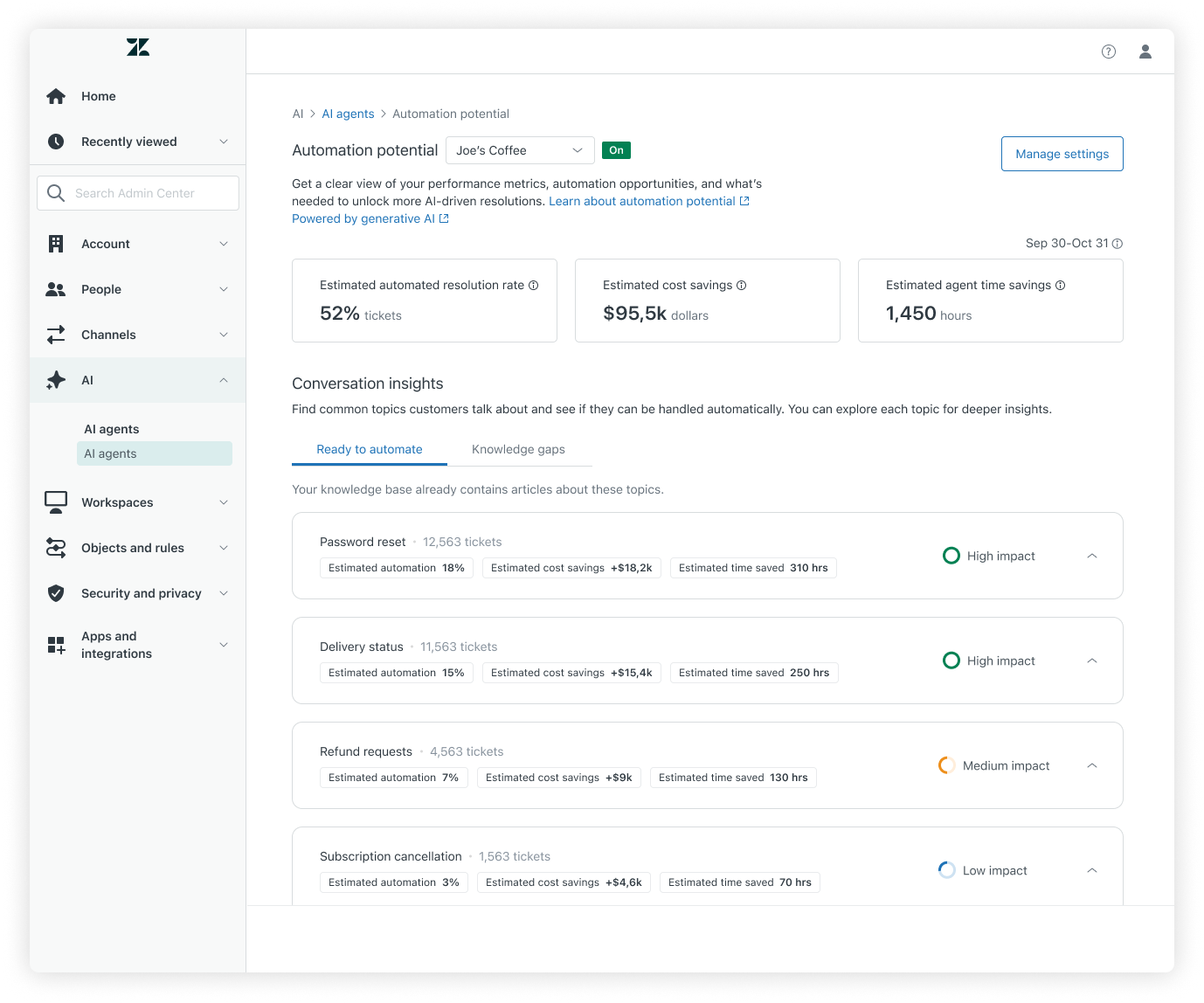

Automation Potential is a strategic analytics experience designed to help Zendesk admins understand where and how AI Agents can create real value, both before and after adoption. The goal was to bridge the gap between the abstract promise of AI and concrete operational decisions by translating large volumes of support conversations into clear automation opportunities, cost and time savings, and next steps.

This project sat at the intersection of AI, analytics, and knowledge management, and required close collaboration across Product, Engineering, Data Science, Research, and teams working on other AI initiatives. I led the design effort from early discovery through the EAP (Early Access Program), shaping both the UX strategy and the product narrative for how admins evaluate, adopt, and improve AI Agents over time.

Admins consistently struggled to answer questions like:

• What can I automate today?

• Is it worth the investment?

• What should I do first?

• How do I know if my AI Agent is actually helping?

Existing tools focused on configuration or raw analytics, but not on decision-making. There was no single place where admins could confidently evaluate automation readiness, understand trade-offs, or connect insights to action.

The challenge was not just designing a dashboard, but creating a decision support experience that built trust in predictive data while remaining useful even after AI Agents were enabled.

The core UX challenge was transforming predictive signals (automation potential, cost savings, time savings) into something admins could act on, without overwhelming them or over-promising accuracy.

Key design decisions included:

• Separating insights into "Ready to automate" and "Knowledge gaps", clarifying what can be automated now vs. what first requires content improvements

• Framing metrics as directional and decision-supportive, not absolute truth

• Designing clear CTAs that map insights to realistic admin actions (create AI Agent, improve knowledge, share insights)

Because not all tickets could be classified at the subtopic level, I worked through multiple display models with PM and Data Science. We landed on a pragmatic approach that:

• Shows topics consistently across tabs

• Reveals or hides subtopics based on knowledge coverage

• Clearly communicates limitations through UI copy, tooltips, and expectation-setting

This allowed us to deliver value immediately while setting a clear path toward future ML maturity.

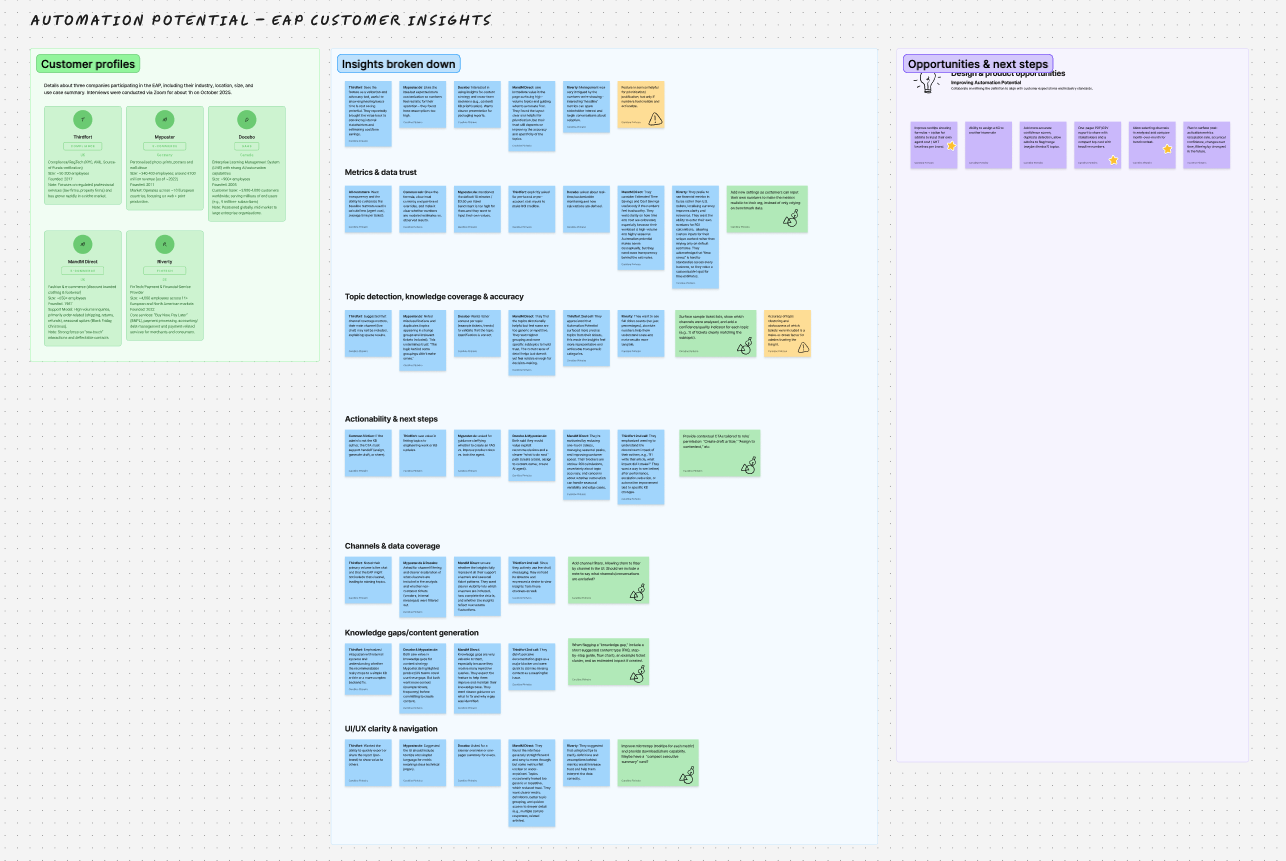

I led EAP feedback collection through:

• Customer interviews

• Surveys

• Qualitative follow-ups with admins across different industries and AI maturity levels

Key insights shaped the roadmap:

• Admins value specific topics surfaced from real tickets, not abstract metrics

• ROI is compelling, but customers want localized and customizable assumptions

• Knowledge and AI Agent ownership often sit with different people, requiring better collaboration workflows

• After activation, admins want to understand the impact of their changes, not just predictions

These learnings directly informed post-adoption concepts such as refinement insights, escalation signals, and content impact visibility.

This project reinforced and expanded how I operate as a senior designer:

• Designing AI experiences is as much about trust and communication as it is about UI

• Predictive systems require strong expectation-setting and transparent limitations

• Alignment across teams is critical when multiple products touch the same user decisions

• EAPs are most valuable when treated as learning systems, not validation checkpoints

Automation Potential pushed me further into strategic product thinking, balancing near-term feasibility with long-term vision while keeping customer decision-making at the center.

© 2026 platzchen · All rights reserved